What Do People Really Think About AI? It Depends on What They Think "AI" Means

When two major polls on AI got different results, we found out why — and what that tells us about the state of AI public opinion.

Public opinion on AI is all over the map. A 2025 Ipsos poll found Americans nearly split: 42% said AI’s benefits outweigh the costs, and 41% said the reverse. A Pew survey from the same year told a different story. In its poll, 50% said they were concerned about AI, while only 10% said they were excited. The recently released Stanford AI Index shows large gaps between what the public thinks about AI and what experts think. Yet researchers, journalists, and policymakers are all citing these poll results as if they’re measuring the same thing. They’re not.

We replicated one of these polls with 500 census-matched U.S. adults and used AI-assisted interviews to find out what respondents were actually thinking when they answered. What we found explains the contradiction and raises serious questions about how standard surveys are shaping the policy conversation around AI. People aren’t evaluating the same “AI” when they answer questions.

Headline Says: Two major polls, conducted in the same year, reach different conclusions about public opinion on AI. One finds Americans evenly split on whether AI has more benefits than costs (Ipsos, 2025); the other finds far more Americans concerned about AI than excited (Pew, 2025).

Survey Says: Ipsos asked whether “products and services using artificial intelligence have more benefits than drawbacks” and found Americans nearly split. Pew framed the question around emotions (concerned vs. excited) and found concern outpacing excitement five to one. Both results were reported as definitive reads on where the American public stands.

Second Raven Says: Both surveys are measuring something important, but neither is capturing what their headlines claim. When we asked the same Ipsos question and used an AI interviewer to find out what people were thinking about when they answered, we found that the topline number conceals a fundamental divide: people reasoning about AI through their own daily experience gave almost exactly opposite answers from people reasoning about AI’s effects on society. Standard surveys blur this divide when they ask for a verdict on “AI” as if it were a single stable concept rather than a word each respondent fills in from their own experiences, concerns, and mental models. Everyone is answering a version of the question, just not the same one.

Our Analysis:

On October 30, 2025, we asked 500 U.S. adults the Ipsos question: “Products and services using artificial intelligence have more benefits than drawbacks.” Our topline looked familiar: 50% agreed, 20% disagreed. Then our AI interviewer asked them what they were actually picturing when they answered.

The variation in interpretation was substantial. Most respondents were thinking primarily of generative AI tools like ChatGPT or Gemini. Others thought of virtual assistants, search algorithms, fitness trackers, or medical tools. A share was thinking of AI in abstract or futuristic terms disconnected from anything they currently use. Standard surveys flatten all of this into a single percentage.

But the more consequential divide wasn’t which AI people thought of. It was the frame they used to evaluate it. Most respondents evaluated AI through the lens of individual experience: does it help me find information, get work done, save time? Only 36% considered at least some societal implications, like job displacement, privacy, and the concentration of AI’s benefits among large corporations.

Those two groups gave nearly opposite answers.

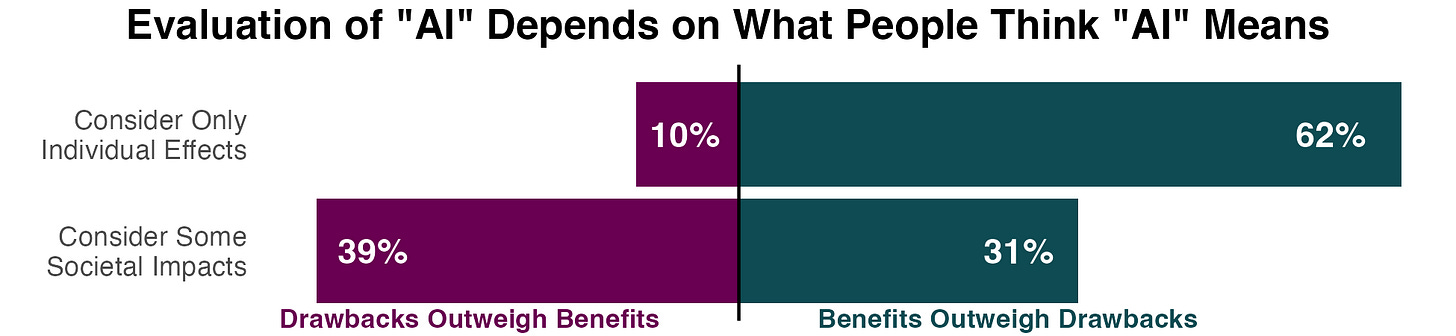

Among those reasoning only about individual effects, 62% said benefits outweigh drawbacks. Just 10% disagreed. Among those who factored in societal impacts, the result flipped: only 31% said benefits outweigh drawbacks, while 39% said the opposite.

Same question. Same day. Opposing conclusions. Outcomes were determined entirely by what the respondent was thinking about when they read the word “AI.”
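
As a rough consistency check on the figures above, the sketch below blends the two groups' answers back into a single topline and computes each group's net opinion (percent agreeing minus percent disagreeing). It rests on two assumptions that the text does not state outright: that the two frames split the sample cleanly, so the individual-only group is the remaining 64%, and that each group's answers stay fixed when the mix changes. The names `topline` and `net_opinion` are illustrative only.

```python
# Percentages reported above for each frame group.
INDIVIDUAL_ONLY = {"agree": 62, "disagree": 10}
SOCIETAL = {"agree": 31, "disagree": 39}

# ASSUMPTION: the two groups partition the sample, so a societal share
# of 36% implies an individual-only share of 64%.
REPORTED_SOCIETAL_SHARE = 0.36


def net_opinion(group):
    """Percent agreeing minus percent disagreeing, in percentage points."""
    return group["agree"] - group["disagree"]


def topline(societal_share):
    """Blend the two groups into one (agree, disagree) pair of percentages.

    ASSUMPTION: each group's answers stay fixed as the mix changes.
    """
    individual_share = 1.0 - societal_share
    agree = (individual_share * INDIVIDUAL_ONLY["agree"]
             + societal_share * SOCIETAL["agree"])
    disagree = (individual_share * INDIVIDUAL_ONLY["disagree"]
                + societal_share * SOCIETAL["disagree"])
    return agree, disagree


agree, disagree = topline(REPORTED_SOCIETAL_SHARE)
print(f"blended topline: {agree:.1f}% agree, {disagree:.1f}% disagree")
print(f"net opinion, individual-only frame: {net_opinion(INDIVIDUAL_ONLY):+d} points")
print(f"net opinion, societal frame: {net_opinion(SOCIETAL):+d} points")
```

Under these assumptions the blend comes to roughly 51% agree and 20% disagree, close to the reported topline of 50% and 20%, with a net opinion of +52 points in the individual-only group and -8 points in the societal group. Calling `topline` with a larger societal share is a hypothetical what-if, not a forecast.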

What Each Group Is Actually Reasoning About:

The “individual effects optimists” aren’t uninformed. They’re answering a reasonable question: is the AI I interact with more useful than harmful? For most people right now, the honest answer is yes. ChatGPT helps with drafts. Claude Code writes more accurate functions. Better autocorrect tools write better sentences and catch typos. These are all legitimate considerations, but they are not an evaluation of AI’s societal trajectory.

Conversely, the “societal effects skeptics” aren’t Luddites. In our interviews, their concerns were specific and grounded. Job displacement came up most frequently. For many respondents, AI’s economic impact is not an abstract worry about automation but a concrete concern about identifiable industries and people. Privacy and surveillance were close behind, often tied to personal experiences that felt intrusive. Several respondents raised the distribution of AI’s gains, worried that the benefits were accruing to large platforms and already-advantaged actors while the risks spread more broadly. Misinformation and electoral manipulation came up repeatedly. These concerns map closely to what AI researchers, labor economists, and democratic theorists are raising in the academic literature.

Why This Matters for Policy

Anyone reading favorable AI toplines as a stable baseline should look carefully at what’s holding them up. Most people think about AI in terms familiar from daily life and mostly see it as a positive. But those who consider the societal effects of AI are much more skeptical. As more Americans weigh those impacts, we should expect the topline numbers to shift. That process will be impeded, however, by media reports of a seemingly unperturbed public based on survey questions we poorly understand.

That foundation shifts as AI becomes more visible in high-stakes domains like hiring, credit, policing, elections, and media. As those experiences accumulate and societal impacts become more salient, more respondents will shift from the individual frame to the societal frame. Based on our data, that shift comes with a sharp reversal in net opinion.

The stakes of getting this wrong are not abstract. The 2026 Stanford AI Index documents a 50-point gap between expert and public optimism on AI’s impact on jobs: 73% of experts expect a positive impact, compared to just 23% of the public. Similar gaps appear in views of AI’s effects on the economy and healthcare. These numbers might be perceived as evidence of public ignorance or expert arrogance, but our findings suggest a different interpretation: experts and the public might be reasoning about different things entirely. A polling apparatus that cannot distinguish between these frames will keep producing gaps that demand explanation, and then fail to provide one.

The Ipsos and Pew contradiction isn’t an anomaly. It’s a predictable feature of a methodology that was not designed to capture this kind of interpretive variation. When media reports present these toplines as definitive public opinion, they give policymakers a false foundation. This systematically underweights the concerns of the people most attentive to AI’s democratic implications.

Better surveys that specify what kind of AI is being evaluated, at what scale, and with what stakes would help. Generative AI reached widespread adoption faster than the personal computer or the internet. When policymakers mistake conceptual confusion in survey responses for settled public values, regulatory frameworks get built on unstable foundations. The kind of AI-assisted interviews we used here, which let respondents show us what they’re reasoning about before we start counting, would help too. Until we ask better questions, polls on AI will keep generating headlines that confuse the debate more than they clarify it.
